More than 400 million people speak Arabic. Yet until now, the artificial intelligence models built for them have lacked the rigorous, standardised evaluation that’s been commonplace for English and other major languages for years. That gap is closing.

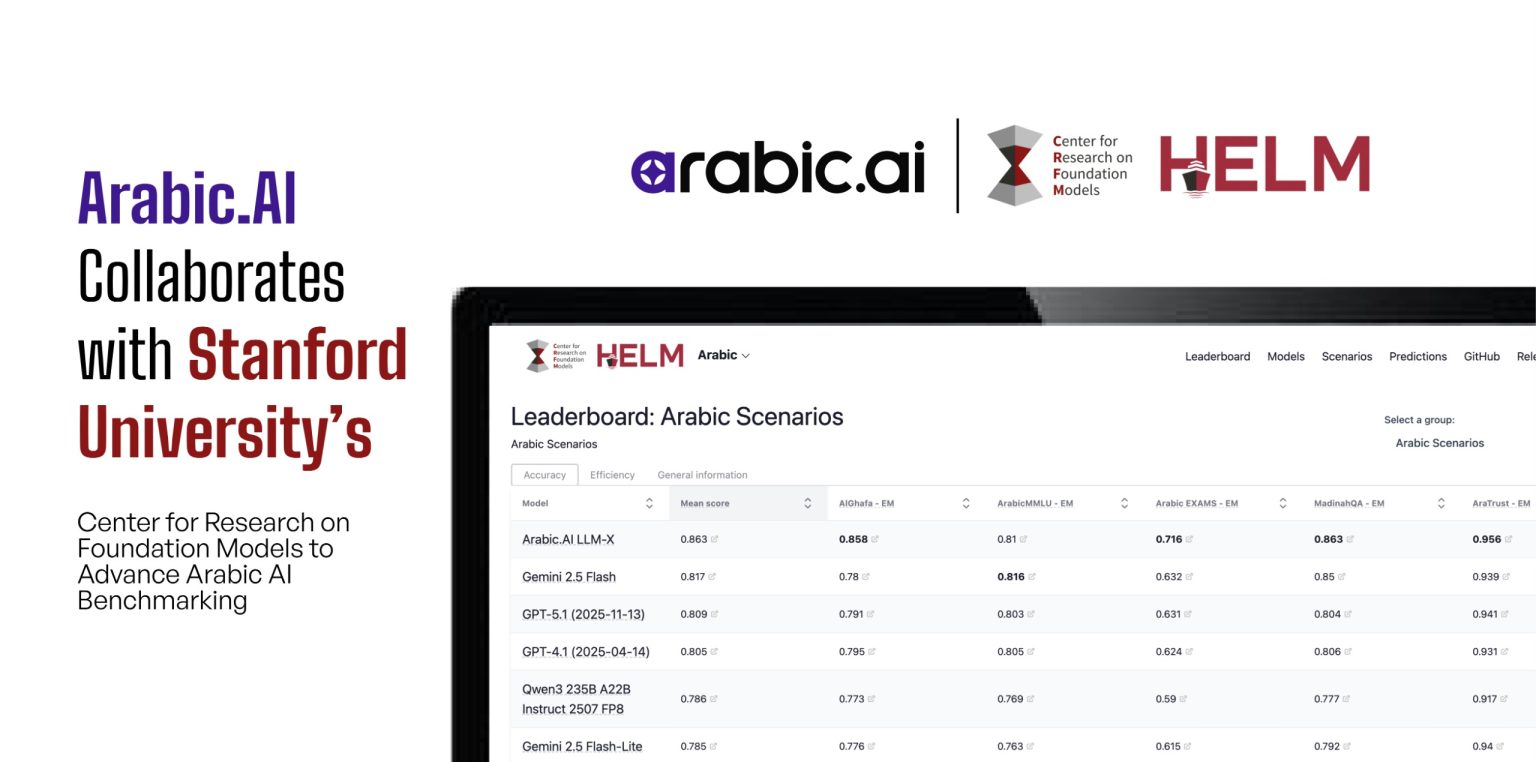

A collaboration between Dubai-based Arabic.AI and Stanford University’s Center for Research on Foundation Models has just completed its first phase: an Arabic extension of HELM, the influential benchmarking framework that’s become a trusted reference point in AI research circles. The result is the first holistic leaderboard for Arabic large language models, giving researchers and enterprises a transparent way to compare performance across different systems.

For an industry that measures everything—accuracy rates, response times, reasoning capabilities—the absence of such tools for Arabic has been conspicuous. Stanford’s CRFM pioneered HELM as an open-source platform designed to assess not just what foundation models can do, but where they fall short and what risks they carry. Extending that framework into Arabic means the language now gets the same level of scrutiny that’s shaped development in other linguistic markets.

The partnership pairs Stanford’s benchmarking expertise with Arabic.AI’s practical experience building Arabic-first models. The Dubai firm has developed two large language models—LLM-X, its flagship system, and LLM-S, a smaller variant—that rank among the most advanced in the Arabic AI space. But even for a company with commercial models in the market, the collaboration serves a broader purpose.

“Arabic is spoken by more than 400 million people, yet it has historically been underserved in AI benchmarking,” said Nour Al Hassan, CEO of Arabic.AI. “This collaboration with Stanford’s CRFM ensures that Arabic is evaluated with the same rigor, transparency, and visibility as other global languages. It is a step forward not just for Arabic.AI, but for the entire Arabic AI community.”

That framing—public good over competitive advantage—reflects an unusual dynamic. Arabic.AI stands to benefit from credible benchmarks that showcase its own models’ strengths. Yet the leaderboard will assess any Arabic LLM, creating a level playing field that could just as easily highlight competitors.

What’s been completed so far matters. The Arabic leaderboard is live, built atop the HELM framework. New evaluation methods tailored for conversational AI in Arabic have been developed and integrated. Researchers can now examine how different models handle the language’s morphological complexity, its diverse dialects, and the cultural context that shapes meaning.

The timing is revealing. The milestone arrives in January 2026, years into the generative AI boom that’s reshaped entire industries. English-language models have been stress-tested, scrutinised, and stacked against one another on countless leaderboards. Arabic, despite its hundreds of millions of native speakers, has mostly watched from the periphery.

That’s changing, albeit gradually. Enterprises operating across the Middle East and North Africa have grown increasingly cautious about adopting AI systems without clear performance data. A marketing team in Riyadh or a customer service operation in Cairo can’t rely on anecdotal claims or vendor promises. They need benchmarks.

Stanford’s involvement lends weight. CRFM has built a reputation for transparent, reproducible evaluation—the kind that withstands academic scrutiny and influences product development. By attaching the HELM brand to Arabic benchmarking, the centre signals that the language warrants serious attention.

For Arabic.AI, the collaboration aligns with a stated mission to advance Arabic AI infrastructure beyond its own product line. The firm positions itself as both a commercial player and a contributor to the broader ecosystem, a balance that requires offering tools and frameworks that competitors can use.

The first phase provides the foundation. An Arabic leaderboard exists. Evaluation methods are documented. The infrastructure is in place for ongoing assessment as new models emerge and existing ones improve.

What comes next remains less defined. Benchmarking is iterative—frameworks evolve as models grow more capable and as researchers identify new dimensions worth measuring. The conversational AI evaluations completed in this phase represent one slice of what matters. Question-answering, reasoning, content generation, cultural appropriateness, dialect handling—each demands its own metrics.

Still, the lack of a standard benchmark has been addressed. Arabic now has the measuring stick it lacked, built by researchers who understand both the language’s complexity and the standards that credible evaluation requires. Whether that accelerates Arabic AI development or simply makes existing gaps more visible will become clear as enterprises and research teams begin using the tools.

The leaderboard and evaluation methods are accessible through Stanford’s CRFM website, part of the centre’s broader HELM platform. Open access means any research group or company can assess models, compare results, and contribute findings—an approach that treats benchmarking as shared infrastructure rather than proprietary insight.

For a language spoken from Morocco to the Gulf, from Cairo to Baghdad, the implications extend beyond technical performance metrics. AI systems shape how people access information, conduct business, and interact with technology. When those systems lack rigorous evaluation, the more than 400 million speakers relying on them are left navigating uncertainty.

That uncertainty, at least in one domain, has just diminished. The tools exist. The leaderboard is live. What Arabic AI does with that foundation will unfold over the months ahead.